On 2 April 2026, Google DeepMind released something that deserves your attention, even if you're not a developer. Gemma 4 is a family of open, multimodal artificial intelligence models that are free to download and free to use commercially. In other words, anyone can download them, modify them, integrate them into a paid product and redistribute them without asking Google for permission. In a sector where the most powerful models remain locked behind paid APIs and restrictive terms of use, this represents a concrete shift.

It's not just another announcement in the never-ending stream of AI model releases. It's a strategic decision that puts Google back in the race for open artificial intelligence, and changes the rules of the game for developers, businesses and, indirectly, for you.

What Gemma 4 really is

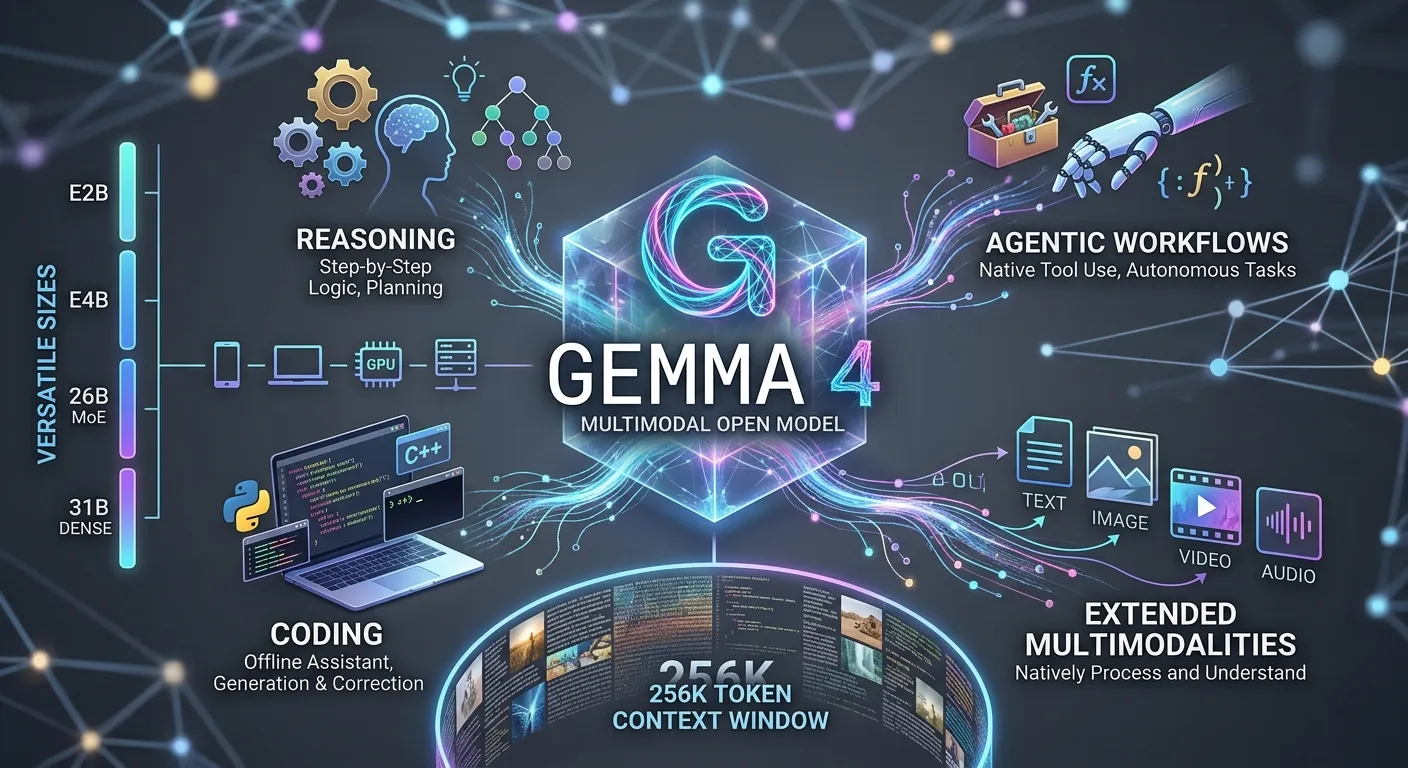

Gemma 4 is not a single model. It's a family of four models with distinct sizes and uses. The E2B model (Effective 2 Billion) and the E4B model are designed to run directly on mobile devices, Raspberry Pi boards or consumer laptops. The 26B Mixture of Experts and 31B Dense models, on the other hand, are designed for professional deployment on GPUs or in the cloud.

For you, this range of sizes means that Gemma 4 is not aimed at just one type of user. It targets every profile, from the independent developer who wants to run a model locally without an internet connection, to the large enterprise that deploys AI on sovereign cloud infrastructure with strict compliance guarantees.

According to Google DeepMind's official documentation, Gemma 4 supports context windows of up to 256,000 tokens for the 26B and 31B models. To give you an order of magnitude, that's around 200,000 words of text processed in a single pass. This means you can analyse long documents, entire code bases or complete transcripts without the model losing the thread.
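As a rough back-of-the-envelope check, the figures above imply about 0.78 words per token (200,000 words over 256,000 tokens). The sketch below uses that ratio to estimate whether a text fits in the window. The ratio, the function names and the output reserve are illustrative assumptions; real token counts depend on the tokenizer and on the language of the text.

```python
# Rough context-window check. The ratio is derived from the article's own
# figures (about 200,000 words for 256,000 tokens); it is an assumption,
# not a measured property of the Gemma tokenizer.
CONTEXT_WINDOW_TOKENS = 256_000
WORDS_PER_TOKEN = 200_000 / 256_000  # = 0.78125


def estimate_tokens(text: str) -> int:
    """Estimate the token count of `text` from its whitespace-separated word count."""
    word_count = len(text.split())
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_context(text: str, reserved_for_output: int = 4_000) -> bool:
    """Return True if the estimated prompt leaves `reserved_for_output` tokens free."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    sample = "word " * 150_000
    print(estimate_tokens(sample), fits_in_context(sample))
```

For anything beyond a quick estimate, counting tokens with the model's own tokenizer is the safer choice.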

Gemma 4 and the Apache 2.0 licence: why it's more important than it seems

The licence under which a model is published is often seen as a technical detail. It is not. It is one of the most structuring decisions an AI laboratory can make.

Gemma 4 is published under the Apache 2.0 licence. In practical terms, this means that you can use these models in a commercial product without paying royalties, modify them freely, redistribute them and integrate them into your own services. The main requirement is that you retain the original copyright and licence notices if you redistribute the model.

Compare this to Meta's Llama models, which impose restrictions on commercial use above certain thresholds of monthly active users, or to OpenAI's and Anthropic's proprietary models, which are only accessible via APIs billed on a pay-per-use basis. Gemma 4 removes these barriers. For a start-up looking to build an AI product without depending on an external supplier or exposing itself to unpredictable variable costs, this freedom has real and immediate economic value.

Native multimodality: seeing, hearing, reading and reasoning

One of Gemma 4's most significant technical breaks with earlier generations of Gemma is its native multimodality. The first versions of Gemma were exclusively text-based. Gemma 4 natively handles text, images, video and, for the E2B and E4B models, audio.

This is not a bolted-on feature. According to Google AI's technical documentation, vision and audio processing are integrated directly into the model's weights rather than grafted on via an external pipeline. This architectural difference has important practical consequences: performance on multimodal tasks is more consistent, latency is reduced and model behaviour is more predictable.

In concrete terms, Gemma 4 can analyse PDF documents, read interface screenshots, recognise handwritten text, understand charts, detect objects in images and summarise videos by processing sequences of frames. For a developer building a document assistance application, a visual intelligence tool or an autonomous agent, these capabilities change what is feasible without relying on specialised external services.

Gemma 4 and step-by-step reasoning

One of the least visible but most significant advances in Gemma 4 is its integrated reasoning mode. This mode allows the model to produce an intermediate chain of reasoning before formulating its final answer.

This approach, inspired by the work on chain-of-thought prompting published notably by Wei et al. in their 2022 NeurIPS paper, significantly improves performance on complex logic, mathematics and multi-step planning tasks. The observable difference for you, as a user or developer, is a model that makes fewer errors on problems that require several stages of thought, and that can explain its reasoning transparently.
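To make the idea concrete, here is a minimal sketch of chain-of-thought prompting in the sense of Wei et al.: the few-shot examples spell out their reasoning before giving the answer, which nudges the model to do the same. It only assembles a text prompt. It is not Gemma 4's built-in reasoning mode, and the class name, function name and "Q:/A:" layout are illustrative assumptions.

```python
# Chain-of-thought prompting: each few-shot example shows its reasoning
# before the answer. This builds a plain-text prompt only; it calls no
# model and does not represent Gemma 4's integrated reasoning mode.
from dataclasses import dataclass


@dataclass
class WorkedExample:
    question: str
    reasoning: str  # intermediate steps, written out in full
    answer: str


def build_cot_prompt(examples: list[WorkedExample], question: str) -> str:
    """Assemble a few-shot prompt whose examples include their reasoning."""
    parts = []
    for ex in examples:
        parts.append(f"Q: {ex.question}\nA: {ex.reasoning} The answer is {ex.answer}.")
    # The final question is left open so the model continues with its own reasoning.
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    demo = WorkedExample(
        question="A shelf holds 3 boxes of 12 pens. 5 pens are removed. How many remain?",
        reasoning="3 boxes of 12 pens is 36 pens. Removing 5 leaves 31.",
        answer="31",
    )
    print(build_cot_prompt([demo], "A tray has 4 rows of 6 eggs. 7 break. How many are intact?"))
```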

Gemma 4 on your phone: offline AI becomes a reality

One of the most striking aspects of this release is Gemma 4's ability to run completely offline on popular mobile devices. The E2B and E4B models require a minimum of 4 GB of RAM to operate, which matches the configuration of most modern smartphones.

Google has announced a developer pre-release of AICore, a system integrated into Android that allows applications to access Gemma 4 directly from the operating system, without using the internet. Android developers can now prototype agentic workflows using this infrastructure and prepare applications compatible with future devices equipped with Gemini Nano 4.

For you, this means that a personal assistance, translation, document analysis or content generation application will soon be able to run on your phone without sending your data to an external server. Confidentiality is becoming a structural feature, not a marketing argument.

What Gemma 4 changes for businesses

Organisations handling sensitive data have long faced a difficult dilemma. Either they accept sending their data to third-party cloud APIs, with all the compliance risks that this entails, or they give up on the best-performing models because they cannot deploy them on their own infrastructure.

Gemma 4 changes this equation. According to the official Google Cloud blog, the model is available on Vertex AI with compliance guarantees including sovereign cloud solutions. Companies can deploy Gemma 4 in their own Google Cloud environment, fully control their data and benefit from fine-tuning capabilities on their own business corpora via Vertex AI Training Clusters.

Gemma 4's native function calling, i.e. its ability to invoke external tools in a structured way, makes it possible to build AI agents capable of interacting with internal systems, databases or business APIs without depending on an external supplier. This represents a structural change in the way companies can approach the intelligent automation of their processes.
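On the application side, function calling usually comes down to a small dispatch loop: the model emits a structured request, your code runs the matching function and hands the result back. The sketch below illustrates that pattern under stated assumptions. The JSON shape `{"name": ..., "arguments": {...}}`, the `tool` decorator and `get_order_status` are all hypothetical; the exact format Gemma 4 emits should be taken from its documentation.

```python
# Tool-calling dispatch skeleton. The JSON shape and the example tool are
# hypothetical; they are not taken from the Gemma 4 documentation.
import json
from typing import Any, Callable

TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the model can request it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> dict:
    # Stand-in for a call to an internal system or database.
    return {"order_id": order_id, "status": "shipped"}


def dispatch(model_output: str) -> str:
    """Parse a structured tool call, run the tool, and return a JSON result."""
    try:
        call = json.loads(model_output)
        name, args = call["name"], call.get("arguments", {})
    except (json.JSONDecodeError, KeyError, TypeError) as exc:
        return json.dumps({"error": f"malformed tool call: {exc}"})
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        return json.dumps({"result": TOOLS[name](**args)})
    except TypeError as exc:  # wrong or missing arguments
        return json.dumps({"error": str(exc)})


if __name__ == "__main__":
    print(dispatch('{"name": "get_order_status", "arguments": {"order_id": "A17"}}'))
```

Returning errors as JSON rather than raising keeps the loop going: the model can read the error and retry with a corrected call. Only functions registered explicitly are reachable, which matters when the tools touch internal systems.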

Gemma 4 and its competitors: the need for honesty

It would be inaccurate to present Gemma 4 as a model without limits. On certain reasoning and code generation benchmarks, the proprietary OpenAI and Anthropic models retain measurable advantages. The 31B Dense version of Gemma 4 reached third place in the Arena.ai ranking at the time of its release, which is remarkable for an open source model of its size, but does not mean that it systematically outperforms GPT-4o or Claude 3.7 on all tasks.

But that's the wrong way to frame the question. The relevant comparison is not "Is Gemma 4 better than GPT-4o?" but "Does Gemma 4 offer a sufficient level of performance for your use case, with a freedom of use and control over data that GPT-4o cannot offer?" For a large number of real-life use cases, the answer is yes.

What the 400 million downloads say about the Gemma ecosystem

Since the launch of the first version of Gemma in February 2024, the family's models have been downloaded more than 400 million times, according to Google DeepMind. More than 100,000 variants have been created by the community and published on platforms such as Hugging Face. These figures are not trivial. They bear witness to a living ecosystem, with real adoption by developers who are building concrete things with these models.

Gemma 4 arrives in this ecosystem with native support from day one for Hugging Face Transformers, llama.cpp, Ollama, vLLM, MLX, LM Studio and a dozen other tools that developers use every day. You don't have to wait weeks for the community to adapt the model to your favourite tools. It works immediately in the environments you already know.

What this means for the future

Google is not releasing Gemma 4 out of altruism. It's a strategic decision that aims to anchor its cloud infrastructure, development tools and Android ecosystem at the centre of the next wave of AI applications. But a company's motives and the value it creates for its users are not mutually exclusive.

What Gemma 4 represents in concrete terms is a moment when the gap between accessible AI and cutting-edge AI has narrowed even further. Capabilities that were once reserved for large organisations with substantial API budgets are now available to anyone with a computer, an internet connection and curiosity.

You don't need to be a Google engineer to use what Google has built. This is perhaps the most profound change that Gemma 4 introduces.